Introduction

Welcome to ‘The Future of Text’ Journal.

Why read this?

This Journal serves as a monthly record of the activities of the Future Text Lab, in concert with the annual Future of Text Symposium and the annual ‘The Future of Text’ book series. We have published two volumes of ‘The Future of Text’† and this year we are starting with a new model where articles will first appear in this Journal over the year and will be collated into the third volume of the book. We expect this to continue going forward.

This Journal is distributed as a PDF which will open in any standard PDF viewer. If you choose to open it in our free ‘Reader’ PDF viewer for macOS (download†), you will get useful extra interactions, including these features enabled via embedded Visual-Meta:

- the ability to fold the journal text into an outline of headings.

- pop-up previews for citations/endnotes showing their content in situ.

- a Find command that, with text selected, locates all the occurrences of that text and collapses the document view to show only the matches, with each displayed in context.

- if the selected text has a Glossary entry, that entry will appear at the top of the screen.

- inclusion of Visual-Meta. See:

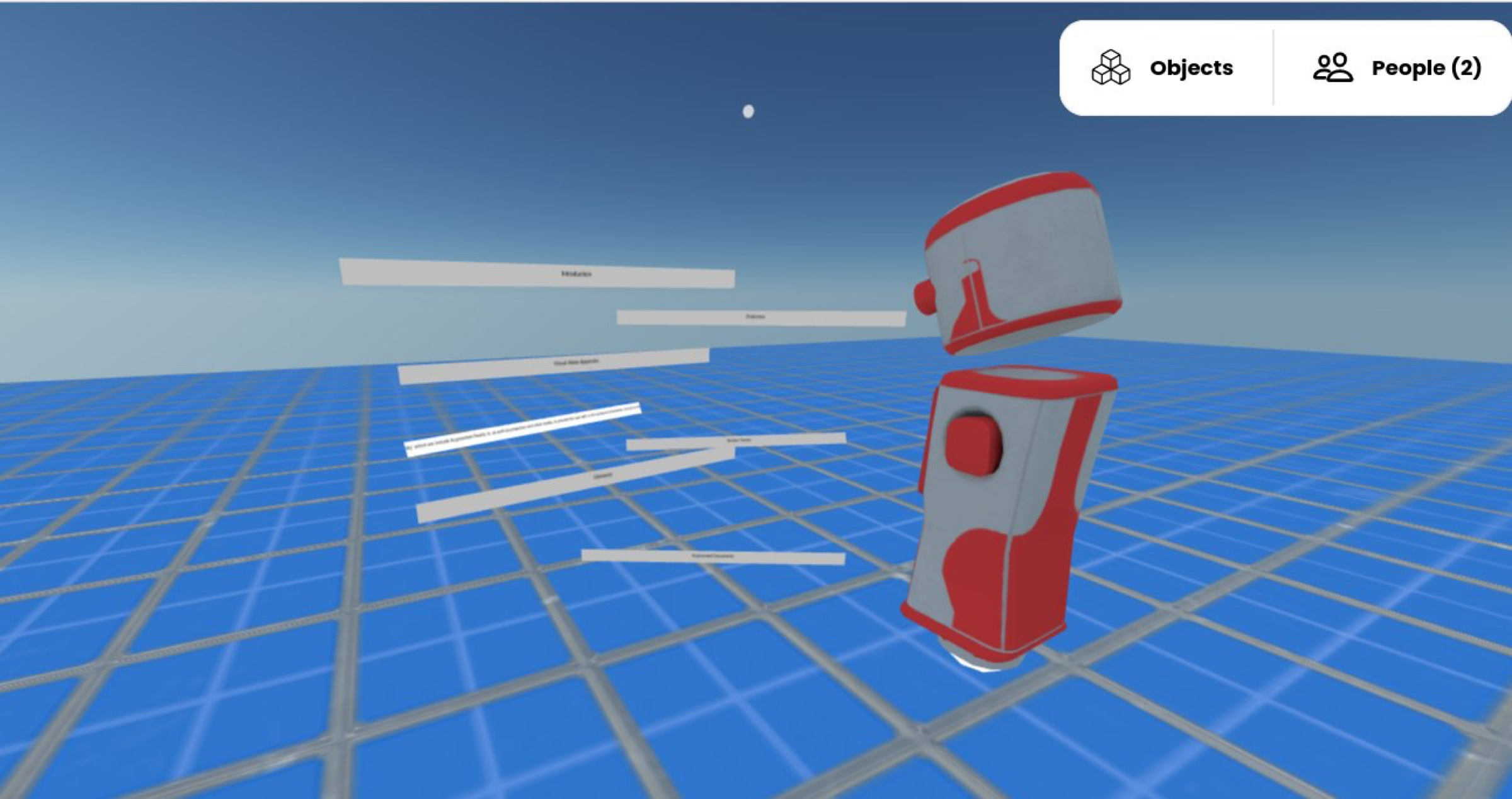

- You can spread the document out and have it float in the air where you want it to.

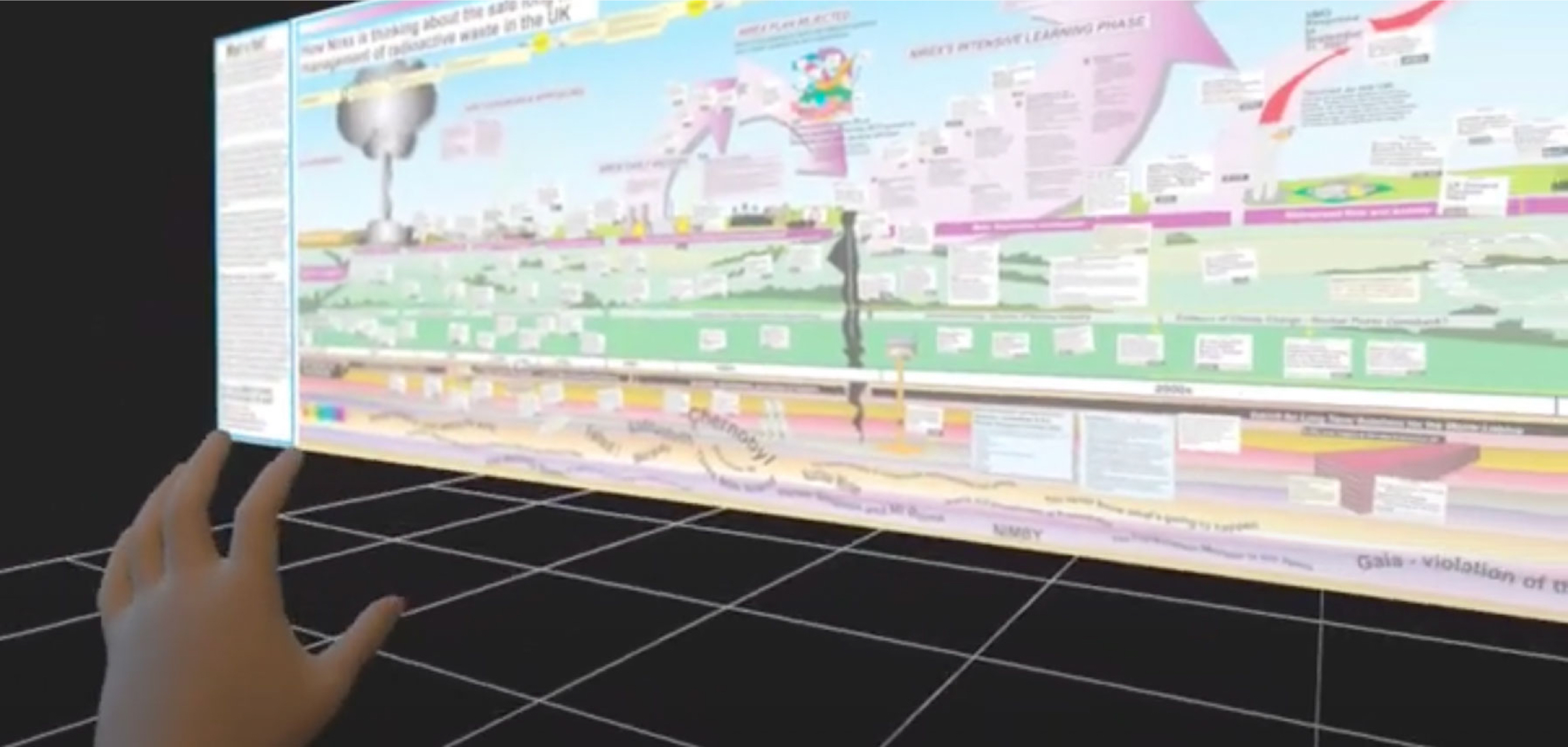

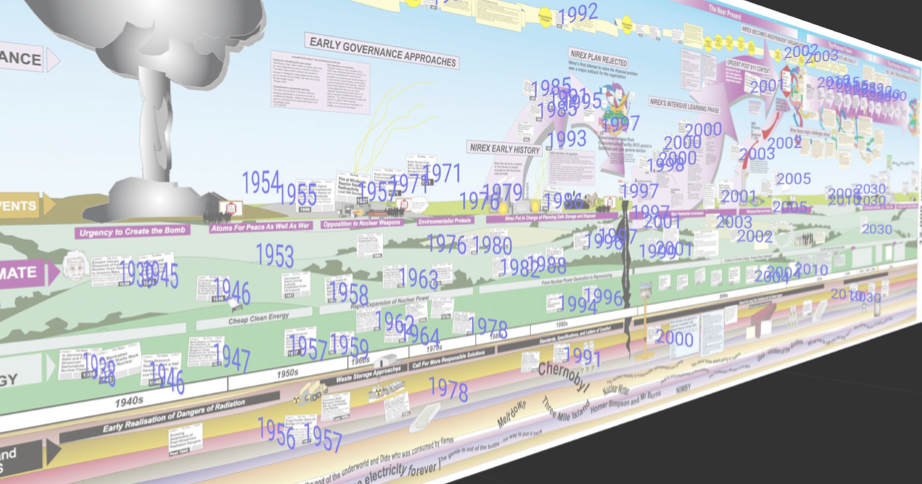

- Any included diagrams can be pulled out and enlarged to fill a wall, where you can discuss and annotate them.

- Any references from that document can be visualised as lines going into the distance and a tug on any line will bring the source into view.

- You can throw your virtual index cards straight to a huge wall and you and the facilitator can both move the cards around, as well as save their positions and build sets of layouts.

- Lines showing different kinds of connections can be made to appear between the cards.

- If the cards have time information they can also be put on a timeline, if they have geographic information they can be put on a map, even a globe.

- If there is related information in the document you brought, or in any relevant documents, they can be connected to this constellation of knowledge.

- You open a document in the knowledge space and you:

- Pull the table of contents to one side for easy overview.

- Throw the glossary into another part of the room.

- Throw all the sources of the document against a wall.

- You manipulate the document with interactions even Tom Cruise would have been jealous of in Minority Report†.

- Journal data (though in a limited form apart from Mark’s work)

- Financial data (stocks, currencies)

- Weather information

- Historical news

- Twitter feeds

- Our own dialogue in text (rough) and video and audio forms

- Websites and web searches

- Likely anything Siri can provide

- Maths equations and formulas

- Scientific data

- Wikipedia

- Wikipedia sidebars

- Wikidata

- Laws

- Individual health data/performance

- Instant messages (at least in our own world)

- How would this button know how to send to your environment?

- How much would you like to work on for this to work and how much might we have to pay someone to do?

- I would like to have this button on our (and in the future, any) website and in my software Reader. How much work would it be for my programmer to integrate it do you think?

- you can go to a text passage, which may mean ‘flying’ up and down if there is a virtual ground level

- you can use a virtual telescope, and read at a distance.

- texts can come to you, as copies or by moving the originals, potentially with parts sticking down through a virtual floor or being folded.

- texts can be ‘chopped’ up in pages placed horizontally, to avoid long texts altogether.

- We would have access to organised citations, in order to build an ‘outside’ library with connections, maybe gathering access to the books via Google Books or something.

- We would also have the Map, though I’m not sure how we’d use it at this point because I don’t use it as the editor yet, but maybe we could figure out how to make it useful for a published work.

- Images are available in native formats and sizes.

- Endnotes could have interesting ways of being accessed.

- We could separate bullets out, plus sentence or heading above them.

- I think that “NFTs are the future” after listening to and understanding “@naval’s belief that NFTs are necessary technology for the metaverse” in “this podcast”

- I love “A Case of You” by “James Blake”, and “this is my favourite live performance”

- We could look back in time and see how someone we admire learnt about a topic. In the first case above, we can understand why @naval believes what he does about NFTs and the metaverse. We can see what influenced him in the past and read those same sources. Further, we could then build on his ideas, and add our own ideas, for example “someone needs to build a platform for trading NFTs in the metaverse”. Others could build off of our ideas, and others could follow their journey as they learn about something new.

- We can understand how an artist we admire created something. In the second case above, we can see when James Blake first listened to the original “A Case of You” by Joni Mitchell, what he thought and felt about it, and why he decided to perform a cover. We could use that understanding to explore Joni Mitchell’s back catalog, or be inspired to create our own content, for example by performing a cover. Followers of Joni Mitchell and James Blake could easily see our covers by following edges along the graph.

See http://visual-meta.info to learn more about Visual-Meta.

Frode Alexander Hegland & Mark Anderson, Editors, with thanks to the Future Text Lab community: Adam Wern, Alan Laidlaw, Brandel Zachernuk, Fabien Benetou, Brendan Langen, Christopher Gutteridge, David De Roure, Dave Millard, Ismail Serageldin, Keith Martin, Mark Anderson, Peter Wasilko, Rafael Nepô and Vint Cerf.

Jad Esber: Guest Presentation

Transcript

Video: https://youtu.be/i_dZmp59wGk?t=513

Jad Esber: Today I’ll be talking a little bit about both, sort of, algorithmic, and human curation. I’ll be using a lot of metaphors, as a poet that’s how I tend to explain things. The presentation won’t take very long, and I hope to have a longer discussion.

On today’s internet, algorithms have taken on the role of taste-making, but also the authoritative role of gatekeeping through the anonymous spotlighting of specific content. If you take the example of music, streaming services have given us access to infinite amounts of music. There are around 40,000 songs uploaded on Spotify every single day. And given the amount of music circulating on the internet, and how it’s increasing all the time, the need for compression of cultural data and the ability to find the essence of things becomes more focal than ever. And because automated systems have taken on that role of taste-making, they have a profound effect on the social and cultural value of music, if we take the example of music. And so, it ends up influencing people’s impressions and opinions towards what kind of music is considered valuable or desirable or not.

If you think of it from an artist’s perspective, despite platforms subverting the power of labels, who were our previous gatekeepers and taste-makers, and claiming to level the playing field, they’re creating new power structures, with algorithms and editorial teams controlling what playlists we listen to, to the point where artists are so obsessed with playlist placement that it’s dictating what music they create. So if you listen to the next few new songs that you hear on a streaming service, you might observe that they’ll start with a chorus, they’ll be really loud, they’ll be dynamic, and that’s because they’re optimising for the input signals of algorithms and for playlist placement. And this is even more pronounced on platforms like TikTok, which essentially strip away all forms of human curation. And I would hypothesise that, if Amy Winehouse released Back to Black today, it wouldn’t perform very well because of its pacing, the undynamic melody. It wouldn’t have pleased the algorithms. It wouldn’t have sold the over 40 million copies that it did.

And another issue with algorithms is that they churn out standardised recommendations that flatten individual tastes; they’re encouraging conformity and stripping listeners of social interaction. We’re all essentially listening to the same songs.

There are actually millions of songs, on ‘Spotify’, that have been played only partially, or never at all. And there’s a service, which is kind of tongue-in-cheek, but it’s called ‘Forgotify’, that exists to give the neglected songs another way to reach you. So if you are looking for a song that’s never been played, or hardly been played, you can go to ‘Forgotify’ to listen to it. So, the answer isn’t that we should eliminate algorithms or machine curation. We actually really need machine and programmatic algorithms to scale, but we also need humans to make it real. So, it’s not one or the other. If we solely rely on algorithms to understand the contextual knowledge around, let’s say, music, that’ll be impossible. Because, at present, human effort, popularity bias, which means only recommending popular stuff, and the cold start problem are unavoidable with music recommendation, even with the very advanced hybrid collaborative filtering models that Spotify employs. So pairing algorithmic discovery with human curation will remain the only option, with human curation allowing for the recalibration of recommendation through contextual reasoning and sensitivity, qualities that only humans really have. Today this has caused the formation of new power structures that place the careers of emerging artists, let’s say on Spotify, in the hands of a very small set of curators that live at the major streaming platforms.
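Editorial sketch: the pairing of algorithmic discovery with human curation described above can be illustrated with a toy ranking. This is a minimal sketch under stated assumptions, not a description of Spotify’s system: the `Track` fields, the `curator_picks` count, and the `human_weight` parameter are all hypothetical, and raw play count stands in for whatever signal a real recommender would use.

```python
from dataclasses import dataclass


@dataclass
class Track:
    title: str
    play_count: int          # how often listeners have played the track
    curator_picks: int = 0   # hypothetical: number of human curators who endorsed it


def algorithmic_score(track, max_plays):
    """Popularity-only score in [0, 1]; a never-played track scores 0 (cold start)."""
    return track.play_count / max_plays if max_plays else 0.0


def blended_score(track, max_plays, max_picks, human_weight):
    """Weighted mix of the popularity score and a human-curation score."""
    human = track.curator_picks / max_picks if max_picks else 0.0
    return (1 - human_weight) * algorithmic_score(track, max_plays) + human_weight * human


def rank(tracks, human_weight=0.6):
    """Sort tracks from highest to lowest blended score."""
    if not tracks:
        return []
    max_plays = max(t.play_count for t in tracks)
    max_picks = max(t.curator_picks for t in tracks)
    return sorted(
        tracks,
        key=lambda t: blended_score(t, max_plays, max_picks, human_weight),
        reverse=True,
    )


tracks = [
    Track("Chart hit", 1_000_000),
    Track("Steady seller", 400_000, curator_picks=1),
    Track("Unplayed gem", 0, curator_picks=3),
]

# With no human input the never-played track is stuck at the bottom.
print([t.title for t in rank(tracks, human_weight=0.0)])
# With curators weighted in, it can surface despite having no plays.
print([t.title for t in rank(tracks, human_weight=0.6)])
```

With `human_weight=0.0` the ranking is pure popularity, which is the popularity bias and cold start problem in miniature; raising the weight lets curator endorsements recalibrate the order.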

Spotify actually has an editorial team of humans that adds context around algorithms and curates playlists. So they’re very powerful. But as a society, we continuously look to others, both to validate specific tastes and to inspire us with new tastes. If I were to ask you how you discovered a new article or a new song, it’s likely that you have heard of it from someone you trust.

People have looked to tastemakers to provide recommendations continuously. But part of the problem is that curation still remains an invisible labour. There aren’t really incentive structures that allow curators to truly thrive. And it’s something that a lot of blockchain advocates, people who believe in Web3, think that there is an opportunity for that to change with this new tech. But beyond this, there is also a really big need for a design system that allows for human-centred discovery. A lot of people have tried, but nothing has really emerged.

I wanted to use a metaphor and sort of explore what bookshelves represent as a potential example of an alternative design system for discovery, human-curated discovery. So, let’s imagine the last time you visited the bookstore. The last time I visited the bookstore, I might have gone in to search for a specific book. Perhaps it was to seek inspiration for another read. I didn’t know what book I wanted to buy. Or maybe, like me, you went into the bookstore for the vibes, because the aesthetic is really cool, and being in that space signals something to people. This book store over here is one I used to frequent in London. I loved just going to hang out there because it was awesome, and I wanted to be seen there. But similarly, when I go and visit someone’s house, I’m always on the lookout for what’s on their bookshelf, to see what they’re reading. That’s especially the case for someone I really admire or want to get to know better. And by looking at their bookshelf, I get a sense of what they’re interested in, who they are. But it also allows for a certain level of connection with the individual that’s curating the books. They provide a level of context and trust that the things on their bookshelves are things that I might be interested in. And I’d love to, for example, know what’s on Frode’s bookshelf right now. But there’s also something really intimate about browsing someone’s bookshelf, which is essentially a public display of what they’re consuming or looking to consume. So, if there’s a book you’ve read, or want to read, it immediately triggers common ground. It triggers a sense of connection with that individual. Perhaps it’s a conversation. I was browsing Frode’s bookshelf and I came across a book that I was interested in, perhaps, I start a conversation around it. So, along with discovery, the act of going through someone’s bookshelf, allows for that context, for connection, and then, the borrowing of the book creates a new level of context. 
I might borrow the book and kind of have the opportunity to read through it, live through it, and then go back and have another conversation with the person that I borrowed it from. And so recommending a book to a friend is one thing, but sharing a copy of that book, in which maybe you’ve annotated the text that stands out to you, or highlighted key parts of paragraphs, that’s an entirely new dimension of connection. What stood out to you versus what stood out to them. And it’s really important to remember that people connect with people at the end of the day and not just with content. Beyond the books on display, the range of authors matters. And even the effort to source the books matters. Perhaps it’s an early edition of a book. Or you had to wait in line for hours to get an autographed copy from that author.

That level of effort, or the proof of work to kind of source that book, also signals how intense my fanship is, or how important this book is to me.

And all that context is really important. And what’s really interesting is also that the bookshelf is a record of who I was, and also who I want to be. And I really love this quote from Inga Chen, she says, “What books people buy are stronger signals of what topics are important to people, or perhaps what topics are aspirationally important, important enough to buy a book that will take hours to read or that will sit on their shelf and signal something about them.” If we compare that to some platforms, like Pinterest for example. Pinterest exists to not just curate what you’re interested in right now, but what’s aspirationally interesting to you. It’s the wedding dresses that you want to buy or the furniture that you want to purchase. So there’s this level of, who you want to become, as well, that’s spoken to through that curation of books, that lives on your bookshelf.

I wanted to come back and connect this with where we’re at with the internet today and this new realm of ownership and what people are calling social objects. And so, if we take this metaphor of a bookshelf and apply it to any other space that houses cultural artefacts, the term people have been using for these cultural artefacts is social objects. We can think of, beyond books, the shirts we wear, the posters we put on our walls, the souvenirs we pick up, they’re all, essentially, social objects. And they showcase what we care about and the communities that we belong to. And, at their core, these social objects act as a shorthand to tell people about who we are. They are like beacons that send out the signal for like-minded people to find us. If I’m wearing a band shirt, then other fans of that artist, that band will, perhaps, want to connect with me. On the internet, these social objects take the form of URLs, of JPEGs, articles, songs, videos, and there are platforms like Pinterest, or Goodreads, or Spotify, and countless others that centre around some level of human-curated discovery, and community around these social objects. But what’s really missing from our digital experience today is this aspect of ownership that’s rooted in the physicality of the books on your bookshelves. We might turn to digital platforms as sources of discovery and inspiration, but until now we haven’t really been able to attach our identities to the content we consume, in a similar way that we do to physical owned goods. And part of that is the public histories that exist around the owned objects that we have, in the context that isn’t really provided in the limited UIs that a lot of our devices allow us to convey. So, a lot of what’s happening today around blockchains is focused on how can we track provenance or try to verify that someone was the first to something, and how do we, in a way, track a meme through its evolution.
And there are elements of context that are provided through that sort of tech, although limited.

There is discussion around ownership as well. Like, who owns what, but also portability. The fact that I am able to take the things that I own with me from one space to another, which means that I’m no longer leaving fragments of my identity siloed in these different spaces, but there’s a sense of personhood. And so these questions of physical ownership are starting to enter the digital realm. And we’re at an interesting time right now, where a lot of, I think, design systems will start to pop up, that emulate a lot of what it feels like to work, to walk into a bookstore, or to browse someone’s bookshelf. And so, I wanted to leave us with that open question, and that provocation, and transition to more of a discussion. That was everything that I had to present.

So, I will pause there and pass it back to Frode, and perhaps we can just have a discussion from now on. Thank you for listening.

Dialogue

Video: https://youtu.be/i_dZmp59wGk?t=1329

Frode Hegland: Thank you very much. That was interesting and provocative. Very good for this group. I can see lots of heads are wobbling, and it means there’s a lot of thinking. But since I have the mic I will do the first question, and that is:

Coming from academia, one thing that I’m wondering what you think and I’m also wondering what the academics in the room might think. References, as bookshelf, or references as showing who you are, basically trying to cram things in there to show, not necessarily support your argument, but support your identity, do you have any comments on that?

Jad Esber: So, I think that’s a really interesting thought. When I was thinking of bookshelves, they do serve almost like references, because of the thoughts and the insights that you share. If you’re sitting in the bedroom, in the living room, and you’re sharing some thoughts, perhaps you’re having a political conversation, and you point at the book on your shelf that perhaps you read, that’s like, “Hey, this thought that I’m sharing, the reference is right there.” It sort of does add, or kind of provide a baseline level of trust that this insight or thought has been memorialised in this book that someone chose to publish, and it lives on my bookshelf. There is some level of credibility that’s built by attaching your insider thoughts to that credible source. So, yeah, there’s definitely a tie between references, I guess, in citations to the physical setting of having a conversation and a book living on your bookshelf, that you point to. I think that’s an interesting connection beyond just existing as social objects that speak to your identity, as well. That’s another extension as well. I think that’s really interesting.

Frode Hegland: Thanks for that. Bob. But afterward, Fabien, if you could elaborate on your comment in the chat, that would be really great. Bob, please.

Video: https://youtu.be/i_dZmp59wGk?t=1460

Bob Horn: Well, the first thing that comes to mind is:

Have you looked at three-dimensional spaces on the internet? For example, Second Life, and what do you think about that?

Jad Esber: Yeah. I mean, part of what people are proposing for the future of the internet is what I’m sure you guys have discussed in past sessions. Perhaps is like the metaverse, right? Which is essentially this idea of co-presence, and some level of physicality bridging the gap between being co-present in a physical space, in a digital space. Second Life was a very early example of some version of this. I haven’t spent too many iterations thinking about virtual spaces and whether they are apt at emulating the feeling of walking into a bookstore, or leafing through a bookshelf. But I think if you think about the sensory experience of being able to browse someone’s bookshelf, there are, obviously, parallels to the visual sensory experience. You can browse someone’s digital library. Perhaps there’s some level of tactile, you can pick up books, but it’s not really the same. But it’s missing a lot of the other sensory experiences, which provide a level of context. But certainly, allow for that serendipitous discovery that another doesn’t. Like the feed dynamic isn’t necessarily the most serendipitous. It is, to a degree, but it’s also very crafted. And there isn’t really a level of play when you’re going around and looking at things that you do on a bookshelf, or in a bookstore. And so, Second Life does allow for that. Moving around, picking things up and exploring that you do in the physical world. So, I think it’s definitely bridging the gap to an extent, but missing a lot of the sensory experiences that we have in the physical world. I think we haven’t quite thought about how to bridge that gap. I know there are projects that are trying to make our experience of digital worlds more sensory, but I’m not quite sure how close we’ll get. So, that’s my initial thought, but feel free to jump in, by the way, I’d welcome other opinions and perspectives as well.

Bob Horn: We’ve been discussing this a little bit, partially, at my initiative, and mostly at Frode’s urging us on. And I haven’t been in Second Life for, I don’t know, six, or seven, or eight years. But I have a friend who has, who’s there all the time, and says that there are people who have their personal libraries there. That there are university libraries. There are whole geographies, I’m told, of libraries. So, it may be an interesting angle, at some point. And if you do, I’d be interested, of course, in what you came up with.

Jad Esber: Totally. Thank you for that pointer, yeah. There’s a multitude of projects right now that focus on extending Second Life, and kind of bringing in concepts around ownership, and physicality, and interoperability, so that the things that you own in Second Life, you can take with you, from that world, into others. Which, sort of, does bridge the gap between the physical world and the digital, because it doesn’t live within that siloed space, but actually is associated to you, and can be taken from one space to another. Very early in building that out, but that’s a big promise of Web3, so. There’re a lot of hands. So, I’ll pause there.

Frode Hegland: Yeah, Fabien, if you could elaborate on what you were talking about, virtual bookshelf.

Video: https://youtu.be/i_dZmp59wGk?t=1737

Fabien Benetou: Yep. Well, actually it will be easier if I share my screen. I don’t know if you can see. I have a Wiki that I’ve been maintaining for 10 plus years. And on top, you can see the visualisation of the edits when I started for this specific page. And these pages, as I was saying in the chat, are sadly out of date, that’s been 10 years, actually, just for this page. But I was listing the different books I’ve read, with the date, what page I was on. And if I take a random book, I have my notes, the (indistinct), and then the list of books that are related, let’s say, to the book. I don’t have it in VR or in 3D yet, but from that point it definitely wouldn’t be too hard, so… And I was thinking, I have personally a, kind of, (indistinct) that they’re hidden, but I have some books there and I have a white wall there and I love both because when I bring back if either I’m in someone else’s room or my own room. Usually, if I’m in my own room, I’m excited by the book I’ve read or the one that I haven’t read yet. So it brings a lot of excitement. But also, if I have a goal in mind, a task at hand, let’s say, a presentation on Thursday, a thing that I haven’t finished yet, then it pulls me to something else. Whereas if I have the white wall it’s like a blank slate. And again, if I need to pull some references on books and whatnot. So, I always have that tension. And what usually happens is, when I go in a physical bookstore, or library, or bookshop, or friends, serendipity is indeed, it’s not the book I came here for, it’s the one next to it. Because I’m not able to make the link, and usually, if the curation has been done right, and arguably the algorithm, if it’s not actually computational, let’s say, if you use the doing annotation or any other basically annotation system, in order to sort the books or their references, then there should be some connections that were not obvious in the first place. So, to me, that’s the most, I’d say, exciting aspect of that.

Jad Esber: This is amazing, by the way, Fabien. This is incredible that you’ve built this over a decade, that’s so cool. I think what’s also really interesting to extend on that thought, and just to kind of, like, “yes, and” that, there is a certain level of, I mean, I think what you’ve built is very utilitarian, but also the existence of the bookshelf as an expression of identity, I think is interesting. So, beyond just organising the books, and keeping them, storing them in a utilitarian way, them serving as signals of your identity, I think is really interesting. And so, I think a lot of platforms today cater to the utility. If you think about Pocket or even Goodreads to an extent, there is potentially an identity angle to Goodreads versus Tumblr, back in the day, or Myspace or (indistinct) which were much more identity-focused. So there is this distinction of utilitarian, organising, keeping things, annotating, etc. for yourself. But there’s also this identity element of like, by curating I am expressing my identity. And I think that’s also really interesting.

Frode Hegland: Brandel, you’re next. But just wanted to highlight today to the new people in the room including you, Jad. This community, at the moment, is really leaning towards AR and VR. But in a couple of years’ time, what can happen? And that also includes projections and all kinds of different things, so we really are thinking connected with the physical, but also virtual on top. Brandel, please.

Video: https://youtu.be/i_dZmp59wGk?t=1984

Brandel Zachernuk: So, I was really hooked on when you said that you like to be seen in that London bookstore. And it made me think about the fact that on Spotify, on YouTube, on Goodreads for the most part, we’re not seen at all, unless we’re on the specific, explicit page that is there for the purposes of representing us. So, YouTube does have a profile page. But nothing about the rest of our onward activity actually is represented within the context of that. If you compare that to being in the bookstore, you have your clothes on, you have your demeanour, and you can see the other participants. There’s a mutuality to being present in it, where you get to see that, rather than merely that a like button maybe is going up in real-time. And so, I’m wondering what kind of projective representation do you feel we need within the broader Web? Because even making a new curation page still silos that representation with an explicit place, and doesn’t give you the persistent reference that is your own physicality, and body wandering around the various places that you want to be at and be seen at. Now, do you see that as something that there’s a solve to? Or how do you think about that?

Jad Esber: Yeah, I think Bob alluded to this to a degree with Second Life. And the example of Second Life, I think the promise of co-presence in the digital world is really interesting, and potentially could solve for this, part of. I also go to cafes, not just because I like the coffee, but because I like the aesthetic, and the opportunities to rub shoulders with other clientele that might be interesting, because this cafe is frequented by this sort of folk. And that doesn’t exist online as much. I mean, perhaps, if you’re going to a forum, and you frequent a specific subreddit, there is an element of like, “Oh, I’ll meet these types of folks or this chat group, and perhaps, I’ll be able to converse with these types of folks and be seen here.” But I think, how long you spend there, how you show up there, beyond just what you write. That all matters. And how you’re browsing, there’s a lot of elements that are really lost in current user interfaces. So, I think, yeah, Second Life-like spaces might solve for that, and allow us to present other parts of ourselves in these spaces, and measure time spent, and how we’re presenting, and what we’re bringing. But, yeah. I’m also fascinated by this idea of just existing in a space as a signal for who you are. And yeah, I also love that metaphor. And again, this is all stuff that I’m actively thinking about and would love sort of any additional insights, if anyone has thoughts on this, please do share, as well. This is, by no means, just a monologue from my direction.

Frode Hegland: Oh, I think you’re going to get a lot of perspectives. And I will move into… We’re very lucky to have Dene here, who’s been working with electronic literature. I will let her speak for herself, but what they’re doing is just phenomenally important work.

Video: https://youtu.be/i_dZmp59wGk?t=2231

Dene Grigar: Thank you. That’s a nice introduction. I am the managing director, one of the founders, and the curator of The NEXT. And The NEXT is a virtual museum, slash library, slash preservation space that contains, right now, 34 collections of about 3,000 works of born-digital art and expressive writing. What we generally call ‘electronic literature’. But I’ve unpacked that word a little bit for you. And I think this corresponds to a little bit of what you’re talking about in that when I collect, when I curate work, I’m not picking particular works to go in The NEXT, I’m taking full collections. So, artists turn over their entire collections to us, and then that becomes part of The NEXT collections. So it’s been interesting watching what artists collect. So it’s not just their own works, it’s the works of other artists. And the interesting, historical, cultural aspect of it is to see, in particular time frames, artists before the advent of the browser, for example, what they collected, and who they were collecting. Michael Joyce, Stuart Moulthrop, Voyager, stuff like that. Then the Web, the browser, and the net art period, and the rise of Flash, looking to see that I have five copies of Firefly by Deena Larsen because people were collecting that work. Jason Nelson’s work. A lot of his games are very popular. So it’s been interesting to watch this kind of triangulation of what becomes popular, and then the search engine that we built pulls that up. It lets you see that, “Oh, there’s five copies of this. There’s three copies of that. Oh, there’s seven versions of Michael Joyce’s afternoon, a story.” To see what’s been so important that there’s even been updates, so that it stays alive over the course of 30 years.
One other thing I’ll mention, back to your early comment, I have a whole print book library in my house, despite the fact I was in a flood in 1975 and lost everything I owned, I rebuilt my library and I have something like 5,000 volumes of books, I collect books. But it’s always interesting for me, to have guests at my house and they never look at my bookshelf. And the first thing I do when I go to someone’s house and I see books is, like, “Oh, what are you reading? What do you collect?” And so, looking at having The NEXT and all those 3,000 works of art and then my bookshelf, and realising that people really aren’t looking and thinking about what this means. The identity for the field, my own personal taste, I call it my own personal taste, which is very diverse. So, I think there’s a lot to be said about people’s interest in this. And I think it’s that kind of intellectual laziness that drives people to just allow themselves to be swept away by algorithms, and not intervene on their own and take ownership over what they’re consuming. And I’ll leave it at that. Thank you.

Jad Esber: Yeah, I love that. Thank you for sharing. And that’s a fascinating project, as well. I’d love to dig in further. I think you bring up a really good point around shared interests being really key and connecting the right type of folks, who are interested in exploring each other’s libraries. Because not everyone that comes into my house is interested in the books that I’m reading, because, perhaps, they’re from a different field, they’re just not as curious about the same fields. But there is a huge amount of people that potentially are. I mean, within this group, we’re all interested in similar things. And we found each other through the internet. And so, there is this element of, what if the people walking into your library, Dene, are also folks that share the same interests as you? That would actively look and browse through your library and are deeply interested in the topics that you’re interested in. So there is something to be said around how can we make sure that people that are interested in the same things are walking into each other’s spaces? And the interest-based graphs exist on the Web. Thinking about who is interested in what, and how can we go into each other’s spaces. And browsing, or collecting, or curating, or creating is a part of what many algorithms try to do, for better or for worse. But sometimes they leave us in echo chambers, right? And we’re in one neighbourhood and can’t leave, and that’s part of the problem. But yeah, there is something to be said about that. And I think just to go back to the earlier comment that Dene made around the inspirations behind artists’ work. I would love to be able to explore what inspired my favourite artist’s music, and what went into it and go back and listen to that. And I think, part of, again, Web3’s promise is this idea of provenance, seeing how things have evolved and how they’ve become. And crediting everyone in that lineage. 
So, if I borrowed from Dene’s work, and I built on it, and that was part of what inspired me, then she should get some credit. And that idea of provenance, and lineage, and giving credit back, and building incentive systems that allow people to build works that will inspire others to continue to build on top of my work is a really interesting proposal for the future of the internet. And so, I just wanted to share that as well.

Frode Hegland: That’s great. Anything back from you, Dene, on that? Before we move to Mark?

Dene Grigar: Well, I think provenance is really important. And what I do in my own lab is to establish provenance. Even if you go to The NEXT and you look at the works, it’ll say where we got the work from, who gave it to us, the date they gave it to us, and if there’s some other story that goes with it. For example, I just received a donation from a woman whose daughter went to Brown University and studied under Coover, Robert Coover. And she gave me a copy of some of the early hypertext works, and one was Michael Joyce’s afternoon, a story and it was signed. The little floppy disk was signed, on the label, by Michael and she said, “I didn’t notice there was a signature. I don’t know why there’d be a signature on it.” And, of course, the answer, if you know anything about the history, is that Joyce and Coover were friends, there’s this whole line of this relationship and Coover was the first to review Michael Joyce, and made him famous in the New York Times, in 1992. So, I told her that story, and she’s like, “Oh, my god. I didn’t know that.” So, just having that story for future generations to understand the relationships, and how ideas and taste evolve over time, and who were the movers and shakers behind some of that interest, so. Thank you.

Bob Horn: Could I ask, just briefly, what the name of your site is or something? Because it went by so fast that I couldn’t even write it down.

Dene Grigar: https://the-next.eliterature.org/. Yeah, and I encourage you to look at it.

Frode Hegland: Dene, this is really grist for the mill of a lot of what we’re talking about here. Because, with Jad’s notions of identity sharing via the media we consume, and a lot of the visualisations we’re looking at in VR. One of the things we’ve talked about over the last few weeks is guided tours of work where you could see the hands of the author or somebody pointing out things whether it’s a mural, or a book, or whatever. And then, to be able to find a way to have the meta-information you just talked about, be able to enter the room, maybe it could be simply recorded as you saying it, and that is tagged to be attached to these works. Many wonderful layers, I could go on forever. And I expect Mark will follow up.

Video: https://youtu.be/i_dZmp59wGk?t=2771

Mark Anderson: Hi. I just think, they’re really reflections, more than anything else. Because one of the things that really brought me up was this idea of books being a performative thing, which I still can’t get my head around. It’s not something I’ve encountered, and I don’t see it reflected in the world in which I live. So maybe a generational drift in things. For instance, behind me you might guess, I suppose, I’m a programmer. Actually what that shows is it’s me trying to understand how things work, and I need them that close to my computer. My library is scattered across the house, mainly to distribute weight through a rather old crumbly Victorian house. So, I have to be careful where we put the bookcases. I’m just, really reflecting how totally alien I find the notion of books, I certainly don’t have… I struggled to think of, I never placed a book with the intention it’ll be seen in that position by somebody else. And this is sort of not a pushback, it’s just my reflection on what I’m hearing. Because I find it very interesting because it had never occurred to me. I never, ever thought of it in those terms. The other sad thing about that means that, so, are the books merely performative? Or the content is there? I mean, one of the interesting thing I’ve been trying to do in this group is trying to find ways just to share the list of the books that are on my shelf. Not because they are any reflection of myself, but literally, I actually have some books that are quite hard to find, and people might want to know that it was possible to find a copy. And whether they need to come and physically see it, or we could scan something. The point is, “No, I have these. This is a place you can find this book.” And it’s interesting that that’s actually really hard to do. Most systems don’t help because, I mean, the tragedy of recommender systems is they make us so inward-looking. 
So, instead of actually rewarding our curiosity, or making us look across our divides, they basically say, “Right. You lot are a bunch. You go stand over there.” Job done, and (the) recommender system moves on to categorising the next thing. So, if I try to read outside my normal purview, I’m constantly reflecting on the fact that the recommender system is one step behind saying, “Oh, right. You’re now interested in…” No, I’m not. I’m trying to learn a bit about it. But certainly, this is not my area of interest in the sense that I now want to be amidst lots of people who like this. I’m interested in people who are interested by it, but I think those are two very different things. So, I don’t know the answers, but I just raise those, I suppose, as provocations. Because that’s something that, at the moment, our systems are really bad at: allowing us to share content other than as a sort of humblebrag. Or, in your beautifully curated life on Pinterest, or whatever. Anyway, I’ll stop there.

Jad Esber: Yeah, thank you for sharing that. I think it does exist on a spectrum, the identity expressive versus utilitarian need that it solves. But if you take the example of clothing, that might help it a little bit more. So, if we’re wearing a t-shirt, perhaps there’s a utilitarian need, but there is also a performative, or identity expressive need that it solves the way we dress, speaks to who we are as well. So I think the notion of a social object being identity expressive, I think is what I was trying to convey. Think, if you think about magazines on a coffee table. Or you think about the art books that live scattered around your living room, perhaps. That is trying to signal something about yourself. The magazines we read as well. If I’m reading Vogue, I’m trying to say something about who I am, and what I’m interested in reading. The Times, or The Guardian, or another newspaper is also very identity expressive. And taking it out on the train and making sure people see what I’m reading is also identity expressive. So, I think that everything sort of around what we consume and what we wear and what we identify with being a signal of who we are. It’s what I was trying to convey there. But I think you make a very good point. The books next to your computer are there because they’re within reach. You’re writing a paper about something and it’s right there. And so, there is a utilitarian need for the way you organise your bookshelf. The way you organise your bookshelf can be identity expressive or utilitarian. I’ll give you another example. On my bookshelf, I have a few books that are turned face forward, and a few that I don’t really want people to see them, because I’m not really that proud of them. And I have a book that’s signed by the author, I’ll make sure it’s really easy for people to open it and see the signature. And so, there is an identity expressive element to the way I organise my bookshelves as well that’s not just utilitarian. 
So, I think another point to illustrate that angle.

Mark Anderson: To pull us back to our, and as a sub-focus on AR, VR, it just occurred to me it’s something that, the (indistinct) reminder that Dene was talking about, people don’t look at the bookshelves. I’m thinking, yeah and certainly not saying I miss, and it happens less frequently that the evening ends up with a dinner table just loaded with piles of books that have been retrieved from all over the house and are actually part of the conversation that’s going on. And one thing that some of our new tools would be nice to help us recreate that, especially maybe, if we’re not meeting in the same physical space, is to have that element of recall of these artefacts, or at least some of the pertinent parts of the content they’re in. It would be really useful to have because the fact that you bothered to walk up two flights of stairs or something to go and get some book off the top shelf, because that’s, in a sense, part of the conversation going on, I think is quite interesting and something we’ve sort of lost anyway. I’ll let it carry on.

Frode Hegland: It’s interesting to hear what you say there, Mark, because in the calls we have, you’re the one who most often will, “Look, the book arrived. Look, I have this copy now.” And then we all get really annoyed at you because we have to buy the same damn book. So, I think we’re talking about different ways and to different audiences, not necessarily to dinner guests. But for your community of this thing, you’re very happy to share. Which is interesting it’s also two points, to use my hand in the air here. One of them is, clothing came up as well. And some kind of study, I read showed that, we don’t buy clothing we like, we buy clothing that is the kind of clothing we expect people like us to buy. So, even somebody who is really, “I don’t care about fashion” is making a very strong fashion statement. They’re saying they don’t care. Which is anti-snobbery, maybe. You could say that I’m wondering how that enters into this. But also, when we talk about curation, it’s so fascinating how, in this discussion, music and books are almost interchangeable from this particular aspect. And what I found is, I don’t subscribe to Spotify, I never have, because I didn’t like the way the songs were mixed. But what I do really like, and I find amazing, is YouTube mixes. I pay for YouTube premium so I don’t have the ads. That means I’ll have an hour, an hour and a half, maybe two-hour mixes by DJs who really represent my taste. Which is a fantastic new thing. We didn’t have that opportunity before. So that is a few people. And there, the YouTube algorithm tends to put me in direction of something similar. But also this is for music when I work. It’s not for finding new interesting Jazz. When I play this music, when I’m out driving with my family, I hear how incredibly inane and boring it is. It is designed for backgrounding. So the question then becomes, maybe, do we want to have different shelves? Different bookshelves for different aspects of our lives? 
And then we’re moving back into the virtuality of it all. That was my hand up. Mark, is your hand up for a new point? Okay, Fabien?

Video: https://youtu.be/i_dZmp59wGk?t=3321

Fabien Benetou: Yeah a couple of points. The first to me, the dearest to me, let’s say, is the provenance aspect. I’m really pissed or annoyed when people don’t cite sources. I would have a normal conversation about a recipe or anything completely casual, doesn’t have to be academic, and if that person didn’t invent it themselves, I’m annoyed if there is not some way for me to look back to where it came from. And I think, honestly, a lot of the energy we waste as a species comes from that. If you’re not aware, of course, of the source, you can’t cite it. But if you learn it from somewhere not doing that work, I think is really detrimental. Because we don’t have to have the same thought twice if we don’t want to. And if we just have it again, it’s just such a waste of resources. And especially since I’m not a physician, and I don’t specialise in memory, but from what I understood, source memory is the type of memory where you recall, not the information, but where you got it from. And apparently, it’s one of the most demanding. So for example, you learn about, let’s say, a book, and you know somebody told you about that book, and that’s going to be much harder but eventually, if you don’t remember the book itself, but the person who told you about it, you can find it back. So, basically, if as a species, we have such a hard time providing sources and understanding where something comes from, I think it’s really terrible. It does piss me off, to be honest. And I don’t know if metadata, in general, is an answer. If having some properly formatted, any kind of representation of it, I’m not going to remember the ISBN of the book, on the top of my head in a conversation, but I’m wondering in terms of, let’s say if blockchain can solve that? Can Web3 solve it? Especially you mentioned the, let’s say, a chain of value. If you have a source or the reference of somewhere else whose work you’re using, it is fair to reattribute it back. 
They were part of how you came to produce something new. So, I’m quite curious about where this is going to be.

Jad Esber: Yes, thank you for that question. And, yeah. I think there are a few points. First is, I’m going to just comment really quickly on this idea of provenance. And I want to just jump back to answer some of Frode’s comments, as well. But I think, one thing that you highlighted, Fabien, is how hard it is for us to remember where we learned something or got something. And part of the problem is that so much of citing and sourcing is so proactive and requires human effort. And what if things were designed so that it was just built into the process? One of the projects I worked on at YouTube was a way for creators to take existing videos and build on them. So, remixing essentially. And in the process of creating content, I’d have to take a snippet and build on it. And that is built into the creation process. The provenance, the citing are very natural to how I’m creating content. TikTok is really good at this too. And so I wonder if there are, again, design systems that allow us to build in provenance and make it really user-friendly and intuitive to remove the friction around having to remember the source and cite. We’re lazy creatures. We want that to be part of our flow. TikTok’s duets feature and stitching are brilliant. They build provenance into the flow. And so, that’s just one thought. In terms of how blockchains help. So, part of what is a blockchain other than a public record of who owns what, and how things are being transacted. If we go back to TikTok stitching, or YouTube quoting a specific part of a video, and building on it, if that chain of events was tracked and publicly accessible, and there was a way for me to pass value down that chain to everyone that contributed to this new creative work, that would be really cool. And that’s part of the promise. This idea of keeping track of how everything is moving, and being able to then distribute value in an automated way. So, that’s sort of addressing that point. 
And then really quickly on, Frode, your earlier comments, and perhaps tying in with some of what we talked about with Mark, around identity expression. I think this all comes back to the human need to be heard, and understood, and seen, and there are phases in our life, where we’re figuring out who we are, and we don’t really have our identities figured out yet. So, if you think about a lot of teenagers, they will have posters on their walls to express what they’re consuming or who they’re interested in. And they are figuring out who they are. And part of them figuring out who they are is talking about what they’re consuming, and through what they’re consuming, they’re figuring out their identities. I grew up writing poetry on the internet because I was trying to express my experiences, and figure out who I was. And so, I think what I’m trying to say is that there will be periods of our life where the need to be seen, heard, understood or we’re figuring out, and forming our identities are a bigger need. And so, the identity expressive element of para-socially expressing or consuming plays a bigger part. And then, perhaps when we’re more settled with our identity, and we’re not really looking to perform that, becomes more of a background thing. Although, it doesn’t completely disappear because we are always looking to be heard, seen, and understood. That’s very human. So, I’ll pause there. I can keep going, but I’ll pause because I see there are a few other hands.

Frode Hegland: Yeah, I’ll give the torch to Dave Millard. But just on that identity, I have a four-and-a-half-year-old boy, Edgar, who is wonderful. And he currently likes sword fighting and the colour pink. He is very feminine, very masculine, very mixed up, as he should be. So, it’s interesting, from a parental, rather than from just an old man’s perspective to think about the shaping of identity, and putting our posters and so on. It’s so easy to think about life from the point we are in life, and you’re pointing to a teenage part, which none of us are in. So, I really appreciate that being brought into the conversation. Mr. Millard?

Video: https://youtu.be/i_dZmp59wGk?t=3770

David Millard: Yeah, thanks, Frode. Hi, everyone. Sorry, I joined a few minutes late, so I missed the introductions at the beginning. But, yeah. Thank you. It’s a really interesting talk. One of the things we haven’t talked about is kind of the opposite of performative expression, which is privacy. One of the things, a bit like Mark, I’ve kind of learned about myself listening to everyone talking about this, is how deeply introverted I am, and how I really don’t want to let anybody know about me, thank you very much, unless I really want them to. This might be because I teach social network and media analytics to our computer scientists. So, one of the things I teach them about is inference, for example, profiling. I’m reminded of the very early Facebook studies done in the 2000s, about the predictive power of keywords. So, you’d express your interests through a series of keywords. And those researchers were able to achieve 90% accuracy on things like sexuality. This is an American study, so Republican, Democratic preferences. Afro-American, Caucasian, these kinds of things. So I do wonder whether or not there’s a whole element to this, which is subversive or exists in that commercial realm that we ought to think about. I’m also struck by that last comment, actually, that you mentioned, which was about people finding their identities. Because I’ve also been involved in some research looking at how kids use social media. And one of the interesting things about the way that children use social media, including some children that shouldn’t be using social media, because they’re pre-13 or whatever the cut-off age is, is that they don’t use it in a very sophisticated way. And we were trying to find out why that was because we all have this impression of children as being naturally able. There’s the myth of the digital native and all that kind of stuff. And it’s precisely because of this identity construction. 
That was one of the things that came out in our research. So, kids won’t expose themselves to the network, because they’re worried about their self-presentation. They’re much more self-conscious than adults are. So they invest in dyadic relationships. Close friendships, direct messaging, rather than broadcasting identity. So I think there’s an opposite side to this. And it may well be that, for some people, this performative aspect is particularly important. But for other people, this performative aspect is actually quite frightening, or off-putting, or just not very natural. And I just thought I wanted to throw that into the mix. I thought it was an interesting counter observation.

Jad Esber: Absolutely. Thank you for sharing that. To reflect on my experience growing up writing online. I wrote poetry, not because I wanted other people to read, it was actually very much for myself. And I did it anonymously. I wasn’t looking for any kind of building of credibility or anything like that. It was for me a form of healing. It was for me a form of just figuring out who I was. But if someone did read my poetry, and it did resonate with them, and they did connect with me, then I welcomed that. So, it wasn’t necessarily a performative thing. But it was a way for me to do something for myself that, if it connected with someone else, that was welcomed. I think to go back to the physical metaphor of a bookshelf. Part of my bookshelf will have books that I’ll present, and have upfront and want everyone to see, but I also have a book box with trinkets that are out of sight and are just for me. And that perhaps there are people who will come into my space and I’ll show them what’s in that box, selectively. And I’ll pull them out, and kind of walk them through the trinkets. And then, I’ll have some that are private, and are not for anyone else. So, I totally agree. If we think about digital spaces, if we were to emulate a bookshelf online, there will be elements, perhaps, that I would want to present to the world outwardly. There are elements that are for myself. There are elements that I want to present in a selective manner. And I think back to Frode’s point around bookshelves for various parts of my identity. I think that’s really important. There might be some that I will want to publicly present, and others that I won’t. If you think about a lot of social platforms, how young people use social platforms, think about Instagram. Actually, on Tumblr, which is a great example, the average user had four to five accounts. And that’s because they had accounts that they used for performative reasons. And they had accounts that they used for themselves. 
And had accounts for specific parts of their identity. And that’s because we’re solving different needs through this idea of para-socially curating and putting out there what we’re interested in. So, just riffing on your point. Not necessarily addressing it, but sort of adding colour to it.

David Millard: No, that’s great. Thank you. So, you’re right about the multiple accounts thing. I had a student, a few years ago, who was looking at privacy protection strategies, and basically saying that people don’t necessarily use the preferences on their social media platforms, the ‘who can see my stuff’ settings. They actually engage differently with those platforms. So they do exactly that, as you said. They have different platforms, or they have different accounts, for different audiences. They use loads of fascinating stuff, things like social steganography, which is, if they have in-jokes or hidden messages to certain crowds, that they will put in them, their feeds will never miss it. There are all of these really subtle means that people use. I’m sure that all comes into play for this kind of stuff as well.

Jad Esber: Totally. I’ll add to that really quickly. So, if you look at… I did a study of Twitter bios, and it’s really interesting to look at how, as you said, young folks will put very cryptic acronyms that indicate or signal their fanships. They’re looking for other folks who are interested in the same K-pop band, for example. And that acronym in the bio will be a signal to that audience. Like, come follow me, connect with me around this topic, just because the acronym is in there. A lot of queer folks will also have very subtle things in their bios, on their profile to indicate that. But only other queer folks will be aware of. And so, again, it’s not something you necessarily want to be super public and performative about, but for the right folk, you want them to see and connect with. So, yeah. Super interesting how folks have designed their own way of using these things to solve for very specific needs.

Frode Hegland: Just before I let you go, Dave. Did you say steganography or did you say stenography?

David Millard: I think it’s steganography. It’s normally referred to as hiding data inside other data but in a social context. It was exactly what Jad and I were just saying about using different hashtags or just references, quotes that only certain groups would recognise, that kind of stuff, even if they’re from Hamilton.

Frode Hegland: Brendan, I see you’re ready to pounce here. But just really briefly, one of the things I did for my PhD thesis is study the history of citations and references. And they’re not that old. And they’re based around this, kind of, let’s call it, “anal notion” we have today that things should be in the correct box, in the correct order, and if they aren’t, they don’t belong in the correct academic discipline. Earlier this morning, Dave, Mark, and I were discussing how different disciplines have different ways of even deciding what kind of publication to have. It’s crazy stuff. But before we got into that, we have a profession, therefore, we need a code of how to do it. The way people cited each other, of course, was exactly like this. The more obscure the better, because then you would really know that your readers understood the same space. So it’s interesting to see how that is sliding along, on a similar parallel line. Brendan, please. Unless Jad has something specific on that point.

Jad Esber: I was just sourcing a Twitter bio to show you guys. So, maybe, if I find one, I’ll walk through it and show you how various acronyms are indicating various things. And I was just trying to pull it from a paper that I wrote. But, yeah. Sorry, go ahead, Brendan.

Frode Hegland: Okay, yeah. When you’re ready, please put that in. Brendan?

Video: https://youtu.be/i_dZmp59wGk?t=4340

Brendan Langen: Cool. Jad, really neat to hear you talk through, just really everything around identity as a scene online. It’s a point of a lot of the research I’m doing as well. So, interesting overlaps. First, I’ll kind of make a comment, and then I have a question for you that’s a little off base of what we talked about. But the bookshelf, as a representation, is extremely neat to think about when you have a human in the loop because that’s really where contextual recommendations actually come to life. This idea of an algorithm saying that we’ve read 70% of the same books, and I have not read this one text that you have held really near and dear to you might be helpful but, in all honesty, that’s going to fall short of you being able to share detail on why this might be interesting to me. So I guess to, kind of, pivot into a question, one of my favourite things that I read last year was something you did with, I forget the fella’s name, Scott, around reputation systems and novel approach, and so, I’m studying a little bit in this Web3 area, and the idea of splitting reputation, and economic value is really neat. And I’d love to hear you talk a little bit more about ‘Koodos’ and how, either you’re integrating that, or what experiments you’re trying to run in order to bring like curation and reputation into the fold. I guess like, what kind of experiments are you working on with ‘Koodos’ around this reputational aspect?

Jad Esber: Yeah, absolutely. I’m happy to share more. But before I do that, I actually found an example of a Twitter bio, I’ll really quickly share, and then, I’m happy to answer that question, Brendan. So this is from a thing I put together a while ago, and if we look at the username here. So, ‘katie, exclamation mark, seven, four Dune’. So, the seven here actually is supposed to signal to all BTS fans, BTS being a K-pop band, that she is part of that group, that fan community. It’s just that simple seven next to her name. Four Dune is basically a way for her to indicate that she is a very big fan of Dune, the movie, and Timothée Chalamet, the actor. And pinned at the top of her Twitter account is this list of the bands or the communities that she stans, ‘stan’ meaning being a big fan of. And so, again, sort of like, very cryptically announcing the fan communities she’s a part of just in her name, but also, very actively pinning the rest of the fan communities that she’s a member of, or a part of. But, yeah. I just want to share that really quickly. So, to address, Brendan, your questions, just for folks who aren’t aware of the piece, it’s basically a paper that I wrote about how to decouple reputation from financial gain in reputation systems, where there might be a token. So, a lot of Web3 projects promise community contributions will earn you money. And the response that Scott Kominers and I wrote was around, “Hey, it doesn’t actually make sense, for intrinsic motivational reasons, for contributions to earn you money. In fact, if you’re trying to build a reputation system, you should develop a system to gain reputation, that perhaps spins off some form of financial gain.” So, that’s, sort of, the paper. And I can link it in the chat, as well, for folks who are interested. 
So, a lot of what I think about with ‘Koodos’, the company that I’m working on, is this idea of, how can people build these digital spaces that represent who they are, and how can that remain a safe space for identity expression, and perhaps, even solving some of the utilitarian needs. But then, how can we also enable folks, or enable the system, to curate at large, source from across these various spaces that people are building, to surface things that are interesting in ways that aren’t necessarily super algorithmic. And so, a lot of what we think about, and the experiments we run, are around how we can enable people to build reputation around what it is that they are curating in their spaces. So, does Mark’s curation of books in his bookshelf give him some level of reputation in specific fields? That then allows us to point to him as a potential expert in that space. Those are a lot of the experiments that we’re interested in running, just sort of, very high level without getting too in the weeds. But I’m happy to discuss, if you’re really interested in the weeds of all of that, without boring everyone, I’m happy to take that conversation as well.

Brendan Langen: Yeah. I’ll reach out to you because I’m following the weeds there.

Jad Esber: Yeah, for sure.

Brendan Langen: Thanks for the high-level answer.

Jad Esber: No worries, of course.

Frode Hegland: Jad, I just wanted to say, after Bob and Fabien now, I would really appreciate it if you go into sales mode, and really pitch what you’re working on. I think, if we honestly say, it’s sales mode, it becomes a lot easier. We all have passions, there’s nothing wrong with being pushy in the right environment, and this is definitely the right environment. Bob?

Video: https://youtu.be/i_dZmp59wGk?t=4723

Bob Horn: Well, I noticed that your slides are quite visual and that you just mentioned visual. I wonder if, in your poetry life, you’ve thought about broadsheets? And whether you would have broadsheets in the background of coming to a presentation like this, for example, so that you could turn around and point to one and say, “Oh, look at this.”

Jad Esber: I’m not sure if the question is if I… I’m sorry, what was the question specifically about?

Bob Horn: Well, I noticed you mentioned that you are a poet, and poets often, at least in times gone by, printed their poems on larger broadsheets that were visual. And I associated that with, maybe, in addition to bookshelves, you might have those on a wall, in some sort of way, and wondered if you’d thought about it, and would do it, and would show us.

Jad Esber: Yeah. So, the poetry that I used to write growing up was very visual, and it used metaphors of nature to express feelings and emotions. So, it’s visual in that sense. But I am, by no means, a visual artist, or visual in that sense. So, I haven’t explored using or pairing my poetry with visual complements. Although, that sounds very interesting. So, I haven’t explored that. Most of my poetry is visual in the language that I use, and the visuals that come up in people’s minds. I tend to really love metaphors. Although, I realise that sometimes they can be confining, as well. Because we’re so limited to just that metaphor. And if I were to give you an example of one metaphor, or one word that I really dislike in the Web3 world, it’s the ‘wallet’. I’m not sure how familiar you are with the metaphor of a wallet in Web3, but it’s very focused on coins and financial things, like what lives in your physical wallet, whereas what a lot of wallets are today are containers for identity and not just the financial things you hold. You might say, ‘Well, actually, if you look into my wallet, I have pictures of my kids and my dog or whatever.’ And so, there is some level of storing some social objects that express my identity. I share that just to say that the words we use, and the metaphors that we use, do end up also constraining us because a lot of the projects that are coming out of the space are so focused on the wallet metaphor. So, that was a very roundabout answer to say that I haven’t explored broadsheets, and I don’t have anything visual to share with my poetry right now.

Video: https://youtu.be/i_dZmp59wGk?t=4938

Bob Horn: What is, just maybe, in a sentence or two, what is Web3?

Jad Esber: Okay, yeah. Sure. So, Web3, in a very short sense, is what comes after Web2, where Web2 is, sort of, the last phase of the internet that relied on reading and writing content. So if you think about Web1 being read-only, and Web2 being read and write, where we can publish as well, Web3 is read-write-own. So, there is an element of ownership for what we produce on the internet. And so, that’s, in short, what Web3 is. A lot of people associate Web3 with blockchains, because they are the technology that allows us to track ownership. So that’s what Web3 is in a very brief explanation. Brendan, as someone who’s deep in this space, feel free to add as well to that, if I’ve missed anything.

Bob Horn: Thank you.

Brendan Langen: I guess the one piece that is interesting in the wallet metaphor is that, I guess, the Web2 metaphor for identity sharing was like a profile. And I guess I would love to hear your opinion on comparing those two and the limitations of what even a profile provides as a metaphor. Because there are holes in identity if you’re just a profile.

Jad Esber: Totally, yeah. Again, what is a profile, right? It’s a very two-dimensional, like… What was a profile before we had Facebook profiles? A profile when you publish something is a little bit of text about you, perhaps it’s a profile picture, just a little bit about you. But what they’ve become is, they are containers for photos that we produce and there are spaces for us to share our interests and we’re creating a bunch of stuff that’s a part of that profile. And so, again, the limiting aspect of the term ‘profile’ exists. A lot of what’s been developed today, again, just hinges on the fact that it’s tied to a username and a profile picture and a little bio. It’s very limiting. I think that’s another really good example. Using the term ‘wallet’ today, again, is limiting us in a similar way to how profiles limited us in Web2, if we were to think about wallets as the new profile. So that’s a really good point. I actually hadn’t made that connection, so thank you.

Video: https://youtu.be/i_dZmp59wGk?t=5107

Frode Hegland: Fabien?

Fabien Benetou: Thank you. Honestly, I hope there’s going to be, let’s say, a bridge to the pitch. But to be a little bit provocative, honestly, when I hear Web3, I’m not very excited. Because I’ve been burnt before. I checked bitcoin in 2010 or something like this, and Ethereum, and all that. And honestly, I love the promise of the Cypherpunk movement or the ideology behind it. And to be actually decentralised or to challenge the financial system and its abuse speaks to me. I get behind that. But then, when I see the concentration back behind the different blockchains, most of the blockchains are rougher, then I’m like, “Well, we made the dream”. Again, from my understanding of the finance behind all this. And yet, I have tension, because I want to get excited, like I said, the dream should still live. As I briefly mentioned in the chat earlier, surveillance capitalism, and the difference between doing something in public, and doing something on Facebook, it’s not the same. First, because it’s not in public, it’s not a proper platform. But then, even if you do it publicly on Facebook, is the system to issue value and transform that to money. And I’m very naive, I’m not an economist, but I think people should pay for stuff. It’s easy. I mean, it’s simple, at least. So, if I love your poetry, and I can find a way that can help you, then I pay for it. There is no need for an intermediary, in between, especially if it’s at the cost of privacy and potentially democracy behind. So that’s my tension, I want to find a way. That’s why I’m also about provenance, and how we have a chain of sources, and we can reattribute people back down the line. Again, I love that. But when I hear Web3 I’m like, “Do we need this?” Or can we, for example, and I don’t like Visa or Mastercard, but I’m wondering if relying on the centralised payment system is still less bad than a Cypherpunk dream that’s been hijacked.

Brendan Langen: Yeah, I mean, I share your exact perspective. I think Web3 has been tainted by the hyper-financialisation that we’ve seen. And that’s why, when Bob asked what is Web3, it’s just what’s after Web2. I don’t necessarily tie it, from my perspective to crypto necessarily. I think that is a means to that end but isn’t necessarily the only option. There are many other ways that people are exploring, that serve some of the similar outcomes that we want to see. And so, I agree with you. I think right now, the version of Web3 that we’re seeing is horrible, crypto art and buying and selling of NFTs as stock units is definitely not the vision of the internet that we want. And I think it’s a very skeuomorphic early version of it that will fade away and it’s starting to. But I think the vision that a lot of the more enduring projects in the space have around provenance and ownership, do exist. There are projects that exist that are thinking about things in that way. And so, we’re in the very early stages of people looking for a quick buck, because there’s a lot of money to be made in the space, and that will all die out, and the enduring projects will last. And so, I think decoupling Web3 from blockchain, like Web3 is what is after Web2, and blockchain is one of the technologies that we can be building on top of, is how I look at it. And stripping away the hyper-financialisation, skeuomorphic approaches that we’re seeing right now from all of that. And then, recognising also, that the term Web3 has a lot of weight because it’s used in the space to describe a lot of these really silly projects and scams that we’re seeing today. So, I see why there is tension around the use of that term.

Frode Hegland: One of the discussions I had with the upcoming Future of Text work, I’m embarrassed right now, I can’t remember exactly who it was (Dave Crocker), but the point was made that version numbers aren’t very useful. This was in reference to Visual-Meta, but I think it relates to Web2. Because if the change is small you don’t really need a new version number, and if it’s big enough it’s obvious. So, I think this Web3, I think we all kind of agree here, is basically marketing.

Jad Esber: It’s just a term, yeah. I think it’s just a term that people are using to describe the next iteration of the Web. And again, as I said, words have a lot of weight and I’m sure everyone here agrees that words matter. So yeah, I think, when I reference it, usually I’m pointing to this idea of read-write-own. And own being a new entry in the Web. So, yeah.

Bob Horn: I was wondering whether it was going to refer to the Semantic Web, which Tim Berners-Lee was promoting some years ago. Although, not with a number. But I thought maybe they’ve added a number three to it. But I’m waiting for the Semantic Web, as well.

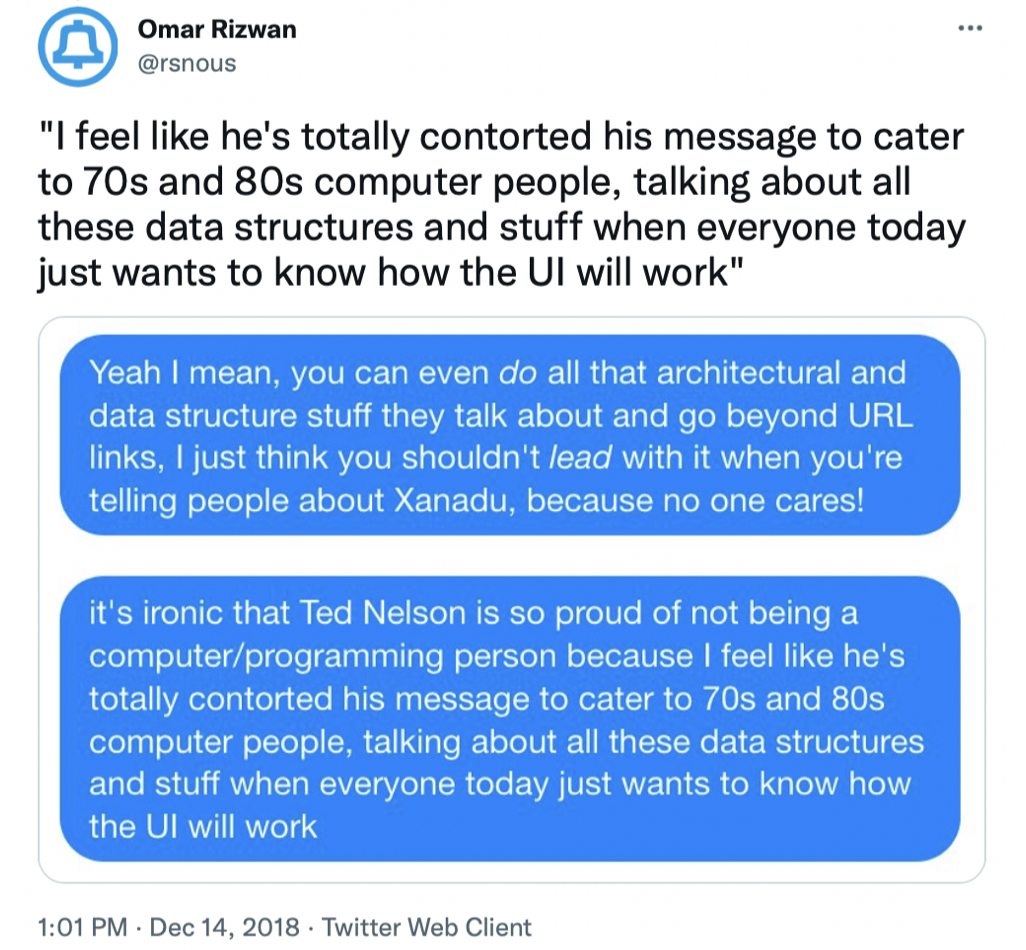

Jad Esber: Totally. I think the Semantic Web has inspired a lot of people who are interested in Web3. So, I think there is a returning back to the origins of the internet, right? Ted Nelson’s thinking, as well, is a big inspiration behind a lot of current thinking in this space. It’s very interesting to see us loop back almost to the original vision of the Web. Yeah, totally.

Frode Hegland: Brandel?

Video: https://youtu.be/i_dZmp59wGk?t=5497

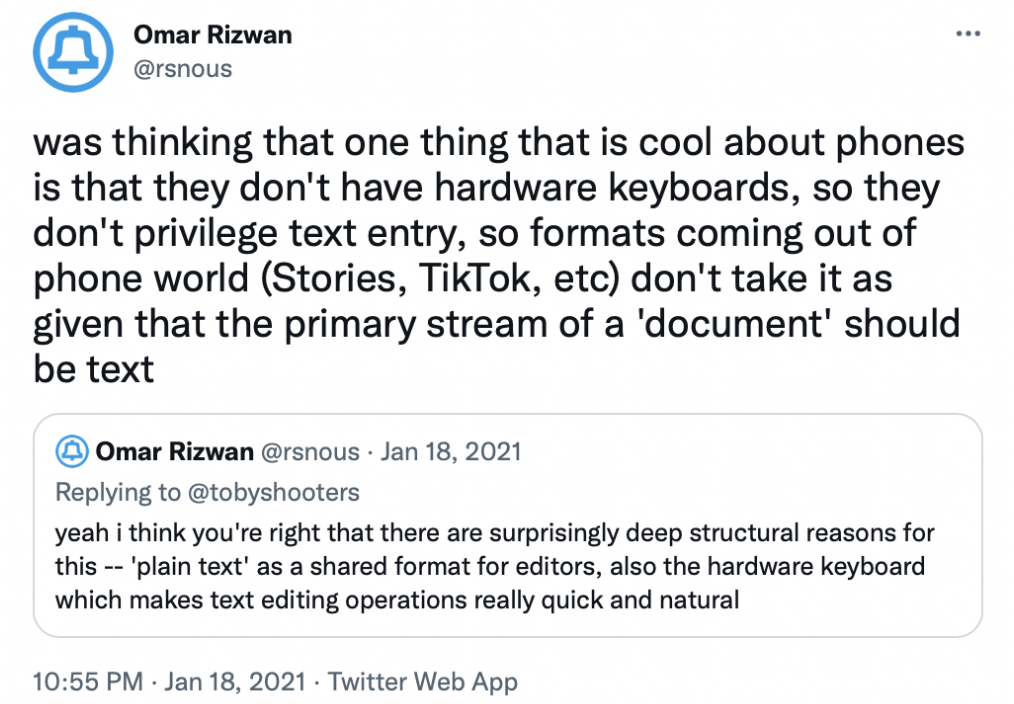

Brandel Zachernuk: You talked a little bit about algorithms, and the way that algorithms select. And painted it as ineffable or inaccessible. But the reality of algorithms is that they’re just the policy decisions of a given governing organisation. And based on the data they have, they can make different decisions. They can present and promote different algorithms. And so that ‘Forgotify’ is a take on upending the predominant deciding algorithm and giving somebody the ability, through some measure of the same data, to make a different set of decisions about what gets recommended. The idea that I didn’t get fully baked, that I was thinking about, is the way that a bookshelf is an algorithm itself, as well. It’s a set of decisions or policies about what to put on it. And you can have a bookshelf, which is the result of explicit, concrete decisions like that. You can have a meta bookshelf, which is the set of decisions that put things on it, that causes you to decide it. And just thinking about the way that there is this continuum between the unreachable algorithms that companies like YouTube and Spotify put out, and the kinds of algorithms internally that drive what it is that you will put on your bookshelf. I guess what I’m reaching for is some mechanism to bridge those and reconcile the two opposite ends of it. The thing is that YouTube isn’t going to expose that data. They’re not going to expose the hyperparameters that they make use of in order to do those things. Or do you think they could be forced to, in terms of algorithmic transparency, versus personal curation? Do you see things that can be pushed on, in order to come up with a way in which those two things can be understood, not as completely distinct artefacts, but as opposite ends of a spectrum that people can reside within at any other point?

Jad Esber: Yeah. You touch on an interesting tension. I think there are two things. One is, things being built, being composable, so people can build on top of them, and can audit them. So, I think the YouTube algorithm is one example of something that really needs to be audited, but also, if you open it up, it allows other people to take parts of it and build on top of it. I think that’d be really cool and interesting. But it’s obviously completely orthogonal to YouTube’s business model and building moats. So composability is sort of one thing that would be really interesting. And auditing algorithms is something that’s much discussed in this space. But I think what you’re touching on, which is a little bit deeper, is this idea of algorithms not capturing emotions, and not capturing the softer stuff. And a lot of folks think and talk about an emotional topology for the Web. When we think about our bookshelf, there are memories, perhaps, that are associated with these books, and there are emotions and nostalgia, perhaps, captured in that display of things that we are organising. And that’s not really very easy to capture using an algorithm. And it’s intrinsically human. Machines don’t have emotions, at least not yet. And so, I think that what humans present is context, and that’s emotional context, nuance, that isn’t captured by machine curation. And so, that’s why, in the presentation, I talk a little bit about the pairing of the two. It’s important to scale things using programmatic algorithms, but also humans make it real, they add that layer of emotion and context. And there is this parable that basically says that human curation will end up leading to a need for algorithmic curation. Because the more you add and organise, the more there’s a need for a machine to go in and help make sense of all the things that we’re organising. It’s an interesting pairing, what balance is important, and it’s an open question.

Frode Hegland: Yeah, Fabien, please. But after that, Brendan, if you could elaborate on what you wrote in the chat regarding this, that would be really interesting. Fabien, please.

Video: https://youtu.be/i_dZmp59wGk?t=5836